Humans have long wanted to travel comfortably in a vehicle. The trail goes back to the Bronze Age, when the first wheel was invented around 3500 BC. From then until 1672, when Ferdinand Verbiest invented the first working steam-powered engine, animals pulled the wheel.

Two centuries later, in 1886, Karl Benz built the Motorwagen, the first automobile, and received a patent for it. A lot has happened in the automobile industry since then. By the time we entered the 21st century, we had fast and fuel-efficient automobiles. There are cars like the Veyron with a top speed at which a Boeing 777 takes off.

We thus satisfied our desire to travel fast and in comfort. But this comfort brought along a lot of uncomfortable things, too: traffic congestion, pollution, and road accidents, to name a few. In 2014 alone, 32,675 people lost their lives in vehicle accidents in the US, which means that on average about 89 people were killed in road accidents every day.

“The significant problems we face today cannot be solved at the same level of thinking we were at when we created them.” – Albert Einstein

Sebastian Thrun’s connection to driving is very personal. When he was 18, he lost his best friend Harald in a road accident, and years later, in 2010, his lab manager Suvan. Thrun is an adjunct professor at Stanford. He is an expert in AI and robotics and founded the Google X Lab, the birthplace of Google Glass, the driverless car, Project Loon, and the Lens Project.

Google’s driverless car is a robotic chauffeur that is always at your service. Thrun’s personal quest to make road accidents a thing of the past led him to the idea of a driverless car. He led the Driverless Car Project at Google with people like Chris Urmson and Anthony Levandowski.

You can relinquish control to the car’s computer and read new articles on TechStory instead of staring at the blinkers of the vehicle in front of you during your daily commute to the office and back.

And when you are tired after a 14-hour shift at the office or a hard night of partying on a Friday, you can doze off in the driver’s seat while your car takes you home.

During rush hour, the car finds the least congested route to drive you home. It recharges its battery by itself, goes to a garage when internal or external problems occur, stops at signals, waits for kids to cross, takes extra care around pedestrians, and saves fuel to save you money and spare the environment. Thanks to Thrun and his team for envisaging and building such an amazing thing.

Well, I know that your curious soul will be thinking of questions like: How do Google’s driverless cars perform these functions? How do they behave on the road? What equipment makes them smarter than a human driver? How do they decide to stop at signals? How do they detect internal and external faults? Am I right? Yeah! Cool.

Well, I had the same questions, and to satisfy my curiosity I turned to Google’s patent filings on its driverless car. Why patents? Because first, I am a patent researcher, and second, patents provide exclusive information where you don’t have to read between the lines.

Once, for example, I used patents to figure out what Xiaomi is up to. But that’s a different story. Google has 223 granted patents and 72 non-granted patent applications on the driverless car, which provide a lot of information on how the car functions.

Collating all that information in a single post would have made this article far too long, so I decided to share it in bits and chunks through a series of posts on the Google driverless car.

In this installment, I will cover the various things the car considers while driving on the road. In the next part, we will discuss the equipment it carries under its hood, and in the last part of the series, we will discuss the car’s user interface.

So let’s start with the things the robotic chauffeur considers while driving on the road.

The Robotic Chauffeur Driving

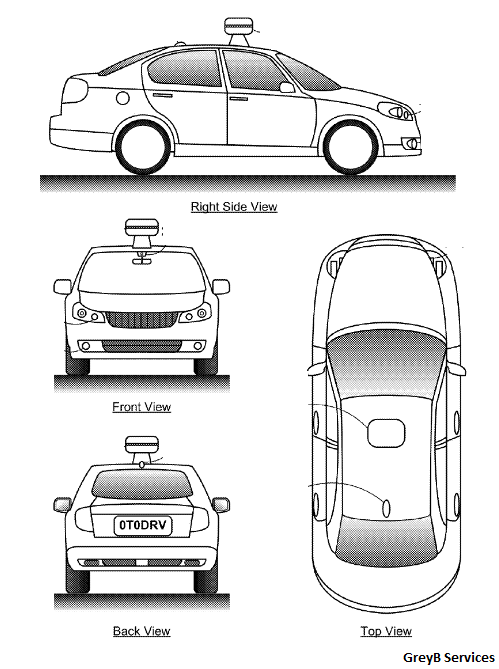

Google’s driverless car uses multiple sensors (laser, radar, localization cameras, and sonar) to detect what is in its vicinity. Together, these sensors are known as the obstacle detection sensor unit. We will discuss this unit in detail in the next post. I mention it here so that you don’t get confused, as I will be using the term ‘obstacle detection unit’ at multiple places in the following text.

“Self-driving cars will be far safer than human-driven cars” – Sergey Brin

Whether it is a traffic-heavy road such as the Golden Gate Bridge or San Francisco’s infamously winding Lombard Street, the driverless car has self-driven more than 1.5 million miles. How it has done so is what we are going to find out today. Here we go:

It Continuously Detects Vehicles and Reads Road Signs in the Autonomous Mode

Would you like to ride with someone who gives no importance to road signs and has no sense of what the blinkers of the car in front signify? The obvious answer is no. But you would love to relinquish the controls to Google’s robotic chauffeur, as it knows the importance of road signs, blinkers, etc.

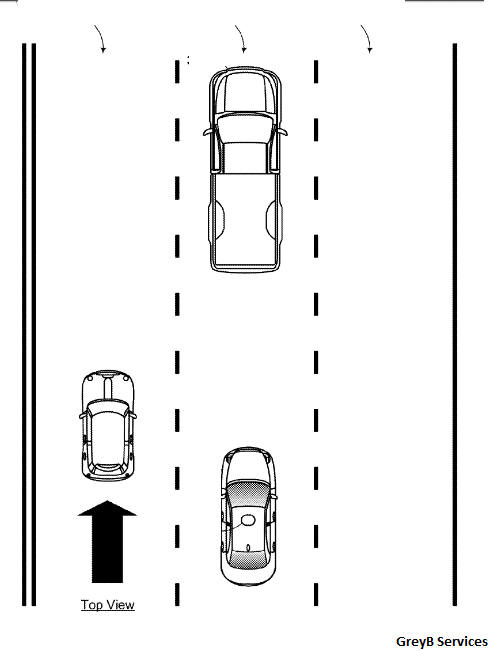

The car uses its obstacle detection unit to identify, track, and predict the movements of pedestrians, bicycles, and vehicles on a road. It uses the same unit to read and decipher road signs. The unit passes what it reads, along with descriptions of the shapes and positions of vehicles in the vicinity, to the car computer.

The car computer uses this information to form a control strategy: accelerating, decelerating, changing lanes, etc.

The computer also changes the car’s speed after reading a road sign. Your car, for example, will automatically go from 50 mph to 35 mph after finding a road sign reading 35 as the maximum speed.
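The speed adjustment can be sketched in a few lines. This is only an illustration, assuming the sensor unit hands the computer the posted limit as a plain number; the function name `target_speed` is hypothetical and not taken from Google’s patents.

```python
from typing import Optional


def target_speed(current_mph: float, posted_limit_mph: Optional[float]) -> float:
    """Return the speed the car should settle at after reading a sign.

    Hypothetical helper: if no sign has been read, keep the current speed;
    otherwise never exceed the posted limit.
    """
    if posted_limit_mph is None:
        return current_mph
    return min(current_mph, posted_limit_mph)


# The article's example: cruising at 50 mph, then a sign reading 35 appears.
print(target_speed(50, 35))  # 35
```

The sketch only slows the car down. Whether the real system speeds back up when the limit rises is not something the text above tells us.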

Apart from the sensor unit, the car computer also uses data stored in its memory to predict locations of road signs, traffic signals, and intersections.

It Predicts the Expected Movement of a Vehicle

The sensor unit, besides providing the shape, size, and location of a vehicle, also reports each detected vehicle’s speed, behavior on the road, bumper stickers, the amount of heat it gives off, etc.

The car computer uses this information to figure out whether the vehicle moving in its vicinity is a Daimler truck, a Tesla Roadster, a Harley motorbike, or a bicyclist on his Giant.

A two-wheeler with a Giant sticker going at 4 mph must be a bicyclist. A vehicle with Daimler’s logo, moving at 25 mph, making a growling sound while giving off a lot of heat must be a truck. A two-wheeler going at 40 mph while zig-zagging should be a motorbike.

After determining a vehicle’s type, the car computer looks up the stored behavior pattern for that type of vehicle on the road. A truck, for example, swerves less than a biker. The computer uses this behavior pattern data to make decisions like whether to give way, whether to overtake, whether to sound the horn, etc.

And yeah, the car also takes note of the blinkers, dippers, and speed of the vehicles in its vicinity, the nature of the road it’s moving on, etc.
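The type-guessing and behavior lookup described in this section could look roughly like the toy rules below. Everything here is an assumption made for illustration: the `Observation` fields, the 10 mph cut-off between bicycle and motorbike, and the `SWERVE_TENDENCY` table are hypothetical, and the real system surely weighs far more signals.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    wheels: int
    speed_mph: float
    logo: str = ""           # text read from a sticker or badge, if any
    high_heat: bool = False  # True when the heat signature is strong


def guess_vehicle_type(obs: Observation) -> str:
    """Toy rules mirroring the three examples in the text."""
    if obs.wheels == 2:
        # Assumed cut-off: slow two-wheelers are treated as bicycles.
        if obs.logo == "Giant" or obs.speed_mph < 10:
            return "bicycle"
        return "motorbike"
    if obs.logo == "Daimler" and obs.high_heat:
        return "truck"
    return "car"


# Hypothetical stored behavior patterns: how much each type tends to swerve.
# Only "a truck swerves less than a biker" comes from the text; the rest are placeholders.
SWERVE_TENDENCY = {"truck": "low", "car": "medium", "motorbike": "high", "bicycle": "high"}

kind = guess_vehicle_type(Observation(wheels=2, speed_mph=40))
print(kind, SWERVE_TENDENCY[kind])  # motorbike high
```

A lookup table keeps the second step separate from the first: once the type is known, the computer can pull whatever behavior data it has stored for that type before deciding to overtake or give way.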

It Makes a Driving Strategy Based on a Detected Sound

Microphones installed in the car are its ears, which it uses to detect various sorts of sounds while in the autonomous mode. After receiving a sound, it determines the sound’s direction and type to make control strategies.

The driverless car, for example, may hear a siren from a fire truck behind it that is also flashing emergency lights. The type of sound lets it figure out that a fire truck is approaching. The car will then make way for the truck.

In addition to the microphone, the car will use its camera to detect the type of vehicle.
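As a rough sketch of how sound type, sound direction, and the camera could feed one decision, consider the function below. The labels (`"siren"`, `"rear"`) and the returned maneuver names are hypothetical; the text only tells us that the car makes way for an approaching fire truck.

```python
def react_to_sound(sound_type: str, direction: str, camera_sees_emergency_lights: bool) -> str:
    """Pick a maneuver from a classified sound (hypothetical labels)."""
    if sound_type == "siren":
        if direction == "rear" and camera_sees_emergency_lights:
            # Microphone and camera agree: an emergency vehicle is behind us.
            return "make_way"
        # A siren without visual confirmation: be cautious and keep looking.
        return "slow_down_and_locate_source"
    return "continue"


print(react_to_sound("siren", "rear", True))  # make_way
```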

It Reads Lane Markers Using Its Laser

Pavement markings give important information about the direction of traffic and where one may and may not travel. They mark traffic lanes and pedestrian crossings, indicate obstacles, and show where it is unsafe to pass.

Reading lane markings thus becomes essential for safe driving. The driverless car’s computer uses the laser to detect and read them. It can distinguish between solid and broken lines, single and double lines, reflectors, lane markers defining the boundary of a lane, etc.

It Is Also Capable of Detecting Construction Zones

The car computer uses various information sources to detect a construction zone on its route. It, for example, gathers information from traffic update services and uses its obstacle detection sensors to read road signs and lane markers to find out that a construction zone is ahead.

Road signs reading Right Lane Closed Ahead, Road Work Ahead, Be Prepared to Stop, etc. signify ongoing construction. The car also uses its camera to work out the geometry of the road and spot ongoing construction.
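Combining these sources can be pictured as a simple check. The sketch below assumes the sign text has already been read into strings and that the traffic update service reduces to a single yes/no flag; the function and constant names are hypothetical.

```python
# Phrases taken from the sign examples in the text above.
CONSTRUCTION_PHRASES = (
    "right lane closed ahead",
    "road work ahead",
    "be prepared to stop",
)


def construction_zone_ahead(sign_texts, traffic_service_reports_work=False):
    """Return True when any information source points to a construction zone."""
    if traffic_service_reports_work:
        return True
    return any(
        phrase in text.lower()
        for text in sign_texts
        for phrase in CONSTRUCTION_PHRASES
    )


print(construction_zone_ahead(["Speed Limit 35", "ROAD WORK AHEAD"]))  # True
```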

It Can Detect Whether It’s Raining or Foggy, or Whether There Is Snow on the Road

Harald, Professor Thrun’s close friend, died because his car slipped on ice on the road. This, I believe, could be the inspiration behind the weather detection feature in Google’s driverless car.

The car uses its laser, camera, radar, precipitation sensors, and real-time weather reports to detect whether the road is wet, dry, or snowy, or whether there is fog around.

Rain

The driverless car uses its laser to scan the road and compares the scanned intensity with the previously stored intensity for a normal day. If the value shifts toward the darker side, the computer concludes that rain has fallen and darkened the road. In addition, the car computer checks whether water is continuously kicked up by a vehicle’s tires and looks for wet tire tracks left by other vehicles.

Snow

Similarly, if the intensity value shifts toward the brighter side, that signifies that the road is covered with snow, which makes it brighter than on a normal day.

Besides the brightness of the road, the computer also uses the elevation, or height, of the roadway to identify weather conditions. For example, if the elevation is higher than on a normal day, there may be water or snow on the roadway.
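The rain and snow checks boil down to comparing a fresh scan with a stored normal-day baseline. Here is a minimal sketch of that comparison; the tolerance values (15% for intensity, 2 cm for elevation) and all names are assumptions picked for illustration, not figures from the patents.

```python
def classify_road_surface(intensity: float, baseline_intensity: float,
                          elevation_m: float, baseline_elevation_m: float,
                          intensity_tol: float = 0.15,
                          elevation_tol_m: float = 0.02) -> str:
    """Compare a laser scan with a stored normal-day baseline."""
    # Relative change in reflected intensity: negative is darker, positive is brighter.
    shift = (intensity - baseline_intensity) / baseline_intensity
    raised = (elevation_m - baseline_elevation_m) > elevation_tol_m
    if shift < -intensity_tol:
        return "wet"    # darker than a normal day
    if shift > intensity_tol:
        return "snowy"  # brighter than a normal day
    if raised:
        return "possibly wet or snowy"  # surface sits higher than usual
    return "dry"


print(classify_road_surface(0.4, 0.6, 0.0, 0.0))  # wet
print(classify_road_surface(0.9, 0.6, 0.05, 0.0))  # snowy
```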

Fog

The driverless car uses its radar to detect fog, comparing the real-time data from the laser and the radar. Apart from the laser and radar, the computer also uses images of the surroundings and data from the precipitation sensor to determine the weather conditions.

Depending on the weather and road surface conditions, the car computer determines whether the conditions are normal or too dangerous for it to handle. Based on this analysis, it sends an alert to the driver to take control.

It may start raining heavily while the driver is sleeping, and the car may determine that it is too difficult for it to drive. In such a case, the computer steers the car to the side of the road and halts it.
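The hand-over logic in the last two paragraphs can be summed up as a small decision function. This is a sketch under stated assumptions: the function name and the returned labels are hypothetical, and the real car presumably gives the driver some time to respond before pulling over.

```python
def weather_response(too_dangerous: bool, driver_took_control: bool) -> str:
    """Choose what to do once the weather analysis is complete.

    Hypothetical decision labels; the article only describes the behavior.
    """
    if not too_dangerous:
        return "keep_driving"
    if driver_took_control:
        return "hand_over_to_driver"
    # Driver did not respond (for example, asleep): stop safely at the roadside.
    return "pull_over_and_halt"


print(weather_response(too_dangerous=True, driver_took_control=False))  # pull_over_and_halt
```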

(Disclaimer: This is a guest post submitted on Techstory by the mentioned authors. All the contents and images in the article have been provided to Techstory by the authors of the article. Techstory is not responsible or liable for any content in this article.)

(Top image credits: wsj.com)

About The Author:

Nitin Balodi works at GreyB Research, where he and his colleagues delve into patents a lot.

He is an aviation freak with the callsign ‘flanker’. He loves writing about technologies that will shape the future of humanity.