Experiments reveal

16 August, 2018

The eerie possibility of robots manipulating humans crops up in science fiction tales like Ex Machina or Battlestar Galactica. But could it happen in real life? The answer is yes, according to new research published Wednesday in Science Robotics, which found that children are particularly susceptible to robotic social influence.

A team led by Anna-Lisa Vollmer, a postdoctoral researcher at Bielefeld University’s Cluster of Excellence Cognitive Interaction Technology (CITEC), reached this conclusion by conducting a type of social conformity experiment called the Asch paradigm. Developed in the 1950s by psychologist Solomon Asch, this methodology tracks whether participants accept or defy majority opinions in a group setting, which yields insights into how peer pressure affects individuals.

These studies normally involve questioning participants in a group that has been infiltrated by “confederates,” or accomplices to the experimenters, who are instructed to push for the wrong answers. Vollmer’s team used a classic approach of displaying a vertical line, and asking participants to select a line matching its length from three comparison lines. Past Asch experiments have shown that confederates can sway a share of participants into answering incorrectly.

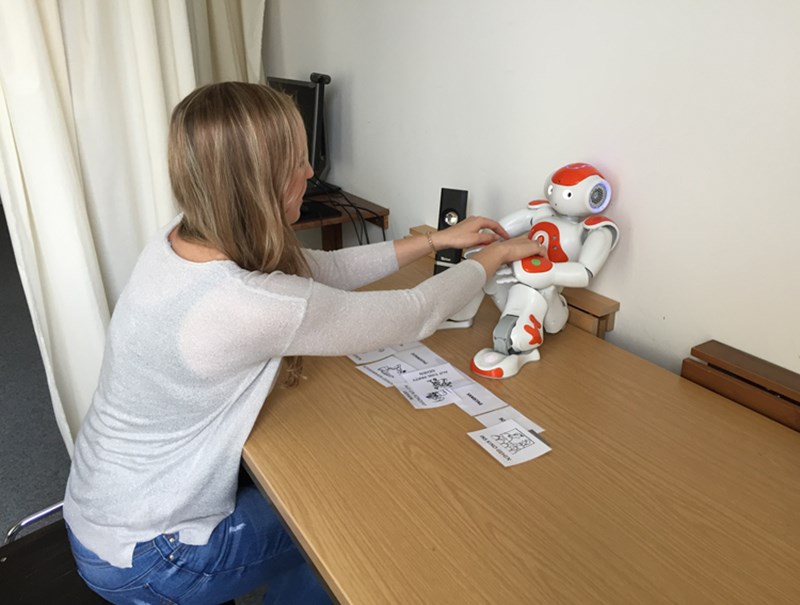

The new study adds an intriguing twist to the paradigm—it was conducted with both robot and human confederates. The researchers gathered 60 adult participants and 43 children aged seven to nine, and assigned them to three experimental setups: 1) answering questions alone, with no social influence; 2) answering questions alongside human confederates (this one was only conducted with the adult group); and 3) answering questions alongside Nao robot confederates.

The results show that adults can be swayed into responding incorrectly by their human peers, as previously demonstrated. However, the adults resisted peer pressure from the robots. The children, meanwhile, were significantly influenced by the robot confederates. When the kids chose the wrong answers, they exactly matched the robots’ responses 74 percent of the time, suggesting that children will often conform to the opinions of the robots.

Vollmer and her colleagues spotlight some problems that robot peer pressure could cause as social robots become more integrated into child-rearing and early education. Robots that are designed to market products, or are programmed with other biases, might exert influence over children’s decisions and development. The team hopes the study sparks discussion of protective measures that would shield children from any risks inherent to robot-child interactions.

The team also hopes to conduct more experiments about robotic peer pressure on adults. Nao robots are cute and childlike, but perhaps adults would react differently to robots that looked more like them. “It would be nice to see if larger robots and even a heterogenous group of robots (different robots) can exert peer pressure on adults,” Vollmer said.

(Image: motherboard.vice.com)