In a positive way

11th September, 2018

GIVE A MAN a fish, the old saying goes, and you feed him for a day; teach a man to fish, and you feed him for a lifetime. The same goes for robots, with the exception that robots feed solely on electricity. The problem is figuring out the best way to teach them. Typically, robots get fairly detailed coded instructions on how to manipulate a particular object. But give one a different kind of object and you'll blow its mind, because the machines aren't yet great at learning and applying their skills to things they've never seen.

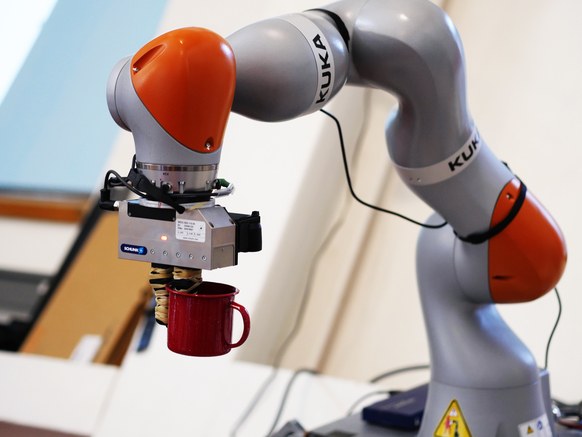

New research out of MIT is helping change that. Researchers have developed a way for a robot arm to visually study just a handful of different shoes, craning itself back and forth like a snake to get a good look at all the angles. Then, when the researchers drop a different, unfamiliar kind of shoe in front of the robot and ask it to pick the shoe up by the tongue, the machine can identify the tongue and give it a lift, with no human guidance. They've taught the robot to fish for, well, boots, as in the cartoons. And that could be big news for robots that are still struggling to get a grip on the complicated world of humans.

Typically, to train a robot you have to do a lot of hand-holding. One way is to literally joystick the robot around so it learns how to manipulate objects, an approach known as imitation learning. Or you can use reinforcement learning, in which you let the robot try over and over to, say, get a square peg into a square hole. It makes random movements and is rewarded through a point system when it gets closer to the goal. That, of course, takes a lot of time. Or you can do the same sort of thing in simulation, though the knowledge a virtual robot learns doesn't easily transfer to a real-world machine.
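The point system described above can be sketched in a few lines of Python. This is only a toy: it uses plain random trial and error with a distance-based reward on a one-dimensional "peg," not a full reinforcement learning algorithm, and every name in it (`reward`, `trial_and_error`, the step size, the seed) is an assumption made for illustration rather than anything taken from the MIT work.

```python
import random

def reward(old_pos, new_pos, goal):
    """Points for one move: positive only if the peg ends up closer to the goal."""
    return abs(goal - old_pos) - abs(goal - new_pos)

def trial_and_error(start, goal, steps=1000, step_size=1.0, seed=0):
    """Try random moves; keep a move only when it earns a positive reward."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    pos = float(start)
    total_reward = 0.0
    attempts = 0
    for _ in range(steps):
        if abs(goal - pos) < 1e-9:  # peg is in the hole, stop trying
            break
        attempts += 1
        candidate = pos + rng.choice([-step_size, step_size])  # random movement
        points = reward(pos, candidate, goal)
        if points > 0:  # rewarded: the move brought the peg closer
            pos = candidate
            total_reward += points
    return pos, total_reward, attempts

final_pos, total_reward, attempts = trial_and_error(start=0, goal=10)
print(f"reached {final_pos} after {attempts} attempts, reward {total_reward}")
```

Even in this tiny example, roughly half the attempts are wasted on moves in the wrong direction, which hints at why the approach gets slow once a real arm has many joints and a far larger space of possible movements.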

This new system is unique in that it is almost entirely hands-off. For the most part, the researchers just place shoes in front of the machine. “It can build up, entirely by itself, with no human help, a very detailed visual model of these objects,” says Pete Florence, a roboticist at the MIT Computer Science and Artificial Intelligence Laboratory and lead author of a new paper describing the system. You can see it at work in the GIF above.

Think of this visual model as a coordinate system, or a collection of addresses on a shoe. Or on several shoes, in this case, which the robot banks as its concept of how shoes are structured. So when the researchers finish training the robot and give it a shoe it's never seen, it has context to work with.
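One way to picture the "addresses" idea is as a lookup: each point on a shoe gets a small vector of numbers, and finding the tongue on a new shoe means finding the point whose vector is closest to the remembered tongue address. The sketch below assumes exactly that and nothing more. The three-number addresses, the pixel coordinates, and the function name `nearest_point` are all made up for illustration; in the real system the robot builds its visual model from its own observations.

```python
import math

def nearest_point(target, descriptors):
    """Return the pixel whose address is closest to `target` (Euclidean distance)."""
    return min(descriptors, key=lambda pixel: math.dist(descriptors[pixel], target))

# Hypothetical address for "tongue", remembered from shoes seen during training.
tongue_address = (0.9, 0.1, 0.4)

# Hypothetical addresses for a few pixels on a never-before-seen shoe:
# (row, col) -> address. The labels in the comments are for the reader only.
new_shoe = {
    (12, 40): (0.10, 0.80, 0.30),  # toe
    (30, 22): (0.85, 0.15, 0.45),  # tongue
    (55, 31): (0.20, 0.20, 0.90),  # heel
}

grasp_pixel = nearest_point(tongue_address, new_shoe)
print("grasp at pixel", grasp_pixel)
```

The new shoe's tongue does not need to match the remembered address exactly; it only needs to be closer to it than any other part of the shoe, which is what lets the same lookup work on footwear the robot has never seen.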

(Image: wired.com)