23 September 2016, India :

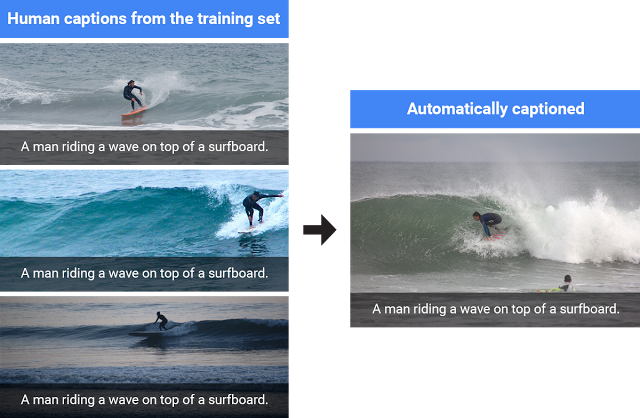

Google has open-sourced a model for its machine-learning system, called Show and Tell, which can view an image and generate accurate and original captions.

Google stated in a blog, “Today’s code release initializes the image encoder using the Inception V3 model, which achieves 93.9% accuracy on the ImageNet classification task. Initializing the image encoder with a better vision model gives the image captioning system a better ability to recognize different objects in the images, allowing it to generate more detailed and accurate descriptions. This gives an additional 2 points of improvement in the BLEU-4 metric over the system used in the captioning challenge.”

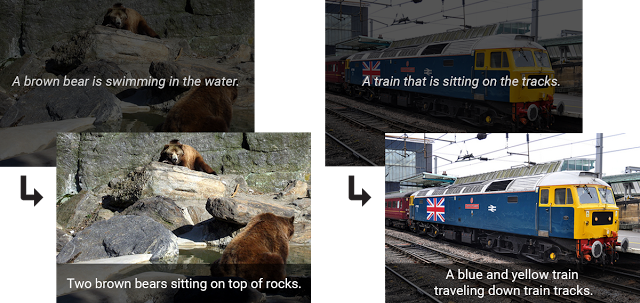

To attain such accuracy, the scientists at Google Brain Team had to train both the vision and language frameworks with captions created by real people. This enables the technology to avoid simply naming the objects in the image.

It added, “Another key improvement to the vision component comes from fine-tuning the image model. This step addresses the problem that the image encoder is initialized by a model trained to classify objects in images, whereas the goal of the captioning system is to describe the objects in images using the encodings produced by the image model. For example, an image classification model will tell you that a dog, grass and a frisbee are in the image, but a natural description should also tell you the color of the grass and how the dog relates to the frisbee.” Source and Images- Google Blog