Apple is one of the world’s biggest technology companies and we all love using our iPhones and other Apple products, don’t we? Well, not very long ago, the technology giant announced its Child Sexual Abuse Material (CSAM) detection tools, which raised major concerns and questions about privacy, child safety and exploitation.

According to recent reports, Apple has announced that it is pushing back the launch of the feature in order to make improvements and address the concerns raised by critics. The Child Sexual Abuse Material detection feature is designed to stop the spread of such material and to help protect children from predators who try to exploit them via communication tools, as mentioned in a report by Engadget.

“Based on feedback from customers, advocacy groups, researchers and others, we have decided to take additional time over the coming months to collect input and make improvements before releasing these critically important child safety features,” says Apple.

Originally, the controversial CSAM feature was set to roll out with the upcoming iOS 15 update and Apple’s other OS updates, but not anymore. iOS 15, iPadOS 15, watchOS 8, and macOS Monterey for our Mac machines are due to launch this month, and the expected release date for the company’s new products is said to be the 14th of September.

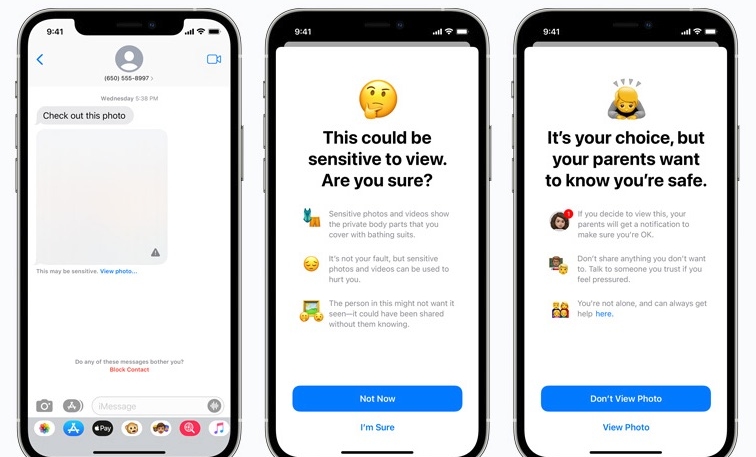

Anyhow, back to the Child Sexual Abuse Material detection feature from Apple! The feature was also supposed to launch for iMessage, where a detection tool using machine learning would notify children and their parents when sexually explicit content, including photos and videos, is shared in the application. This means that the new feature would scan our iMessage, Photos library, and maybe iCloud backup for such content, and only then notify the owners. Reports suggest that sexually explicit photos shared with children will be blurred and will carry a warning. Apple would then direct users to appropriate resources when they search for how to report a CSAM case or make other related searches, as mentioned in a report by Engadget.

Apple’s intentions with the tool may be right, but two Princeton University researchers who say they developed a similar detection tool call the technology “dangerous”. They have raised concerns that such a system could easily be repurposed for censorship and surveillance, allowing authorities and bad actors to exploit Apple’s system.

Anyhow, Apple is all about user privacy, and we can trust that whatever Apple does with the CSAM system will be in compliance with the authorities, and that the system will be foolproof when it comes to protecting children against sexually explicit content.