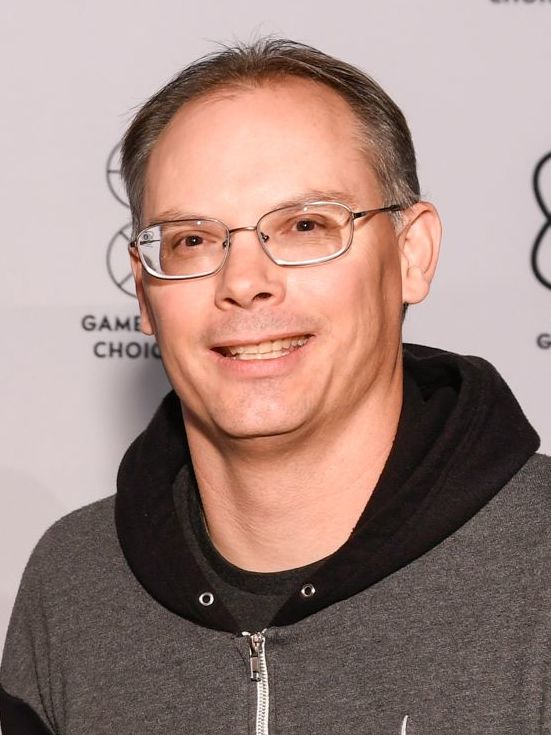

Epic Games CEO Tim Sweeney has slammed Apple Inc., accusing it of building what he calls spyware for the government. He attacked the tech giant over its new Child Safety initiatives for iMessage and iCloud Photos, among others, accusing the company of enabling “state surveillance.”

Anti-CSAM Tools or Government Spyware?

The criticism targets a new set of policies that Apple announced on Thursday in a bid to curb the spread of child sexual abuse material (CSAM) and improve children’s safety online. Under the programme, new tools are set to be introduced in iMessage, Siri and Search. iCloud Photos will also get a new “scanner” that can identify known CSAM imagery. Sweeney took to Twitter to claim that Apple had potentially built a new tool that governments could use to keep tabs on user data.

This isn’t the first time the Epic Games CEO has attacked the Tim Cook-led tech giant. Epic has been locked in a legal battle with Apple over allegedly unfair App Store practices, particularly its commissions and its restrictions on third-party payment options, and Sweeney has been a vocal critic ever since. This time around, Sweeney says Apple has installed “government spyware” on its products based on a “presumption of guilt.” He further claims that the tools’ sole purpose is to monitor user data and pass it on to the government.

Sweeney argues that Apple’s move differs significantly from the content moderation measures used by social media platforms: rather than scanning publicly hosted data, Apple is reaching directly into people’s personal information.

Misplaced Accusations?

However, his claims appear to be at odds with how Apple’s new tools are described as working. Rather than examining the image itself, the system compares mathematical hashes of the photos users store in iCloud against hashes of known CSAM. If matching images are identified, a report is forwarded to the National Center for Missing & Exploited Children (NCMEC). Moreover, the scanner works only on iCloud Photos, not on images stored locally on the phone with iCloud turned off.
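To illustrate the general idea of hash matching, here is a minimal Python sketch; it is not Apple’s implementation. It assumes a plain set of known hashes and uses SHA-256, which only matches byte-identical files. Apple’s published design instead relies on a perceptual hash (which it calls NeuralHash) so that resized or re-encoded copies still match, and adds cryptographic protections this sketch omits. The function names `file_hash` and `find_matches` are hypothetical.

```python
import hashlib


def file_hash(data: bytes) -> str:
    """Return the hex SHA-256 digest of a file's raw bytes.

    Illustrative only: a cryptographic hash matches exact copies, whereas
    Apple's described system uses a perceptual hash.
    """
    return hashlib.sha256(data).hexdigest()


def find_matches(uploads: dict[str, bytes], known_hashes: set[str]) -> list[str]:
    """Return the names of uploaded files whose hash is in the known-hash set.

    Only hashes are compared; the image content itself is never inspected.
    """
    return [name for name, data in uploads.items() if file_hash(data) in known_hashes]


if __name__ == "__main__":
    # Hypothetical database entry standing in for a known flagged image.
    known = {file_hash(b"known-flagged-example")}
    uploads = {
        "holiday.jpg": b"beach photo bytes",
        "flagged.jpg": b"known-flagged-example",
    }
    print(find_matches(uploads, known))  # ['flagged.jpg']
```

Only files whose hash appears in the database are flagged; every other photo produces a non-matching hash and reveals nothing about its contents, which is the property Apple’s defenders point to.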

Sweeney has a response to this as well: he claims Apple uses “dark patterns” to turn iCloud on by default, so that people end up accumulating “unwanted data” in the cloud even when they never chose to.