Apple’s plan to introduce a feature that detects child sexual abuse images on the iPhone is facing fresh criticism and opposition, as cybersecurity researchers lay out its dangers in a new study. The feature had already drawn flak from multiple cybersecurity experts for using “dangerous technology.” The plan, announced in August, raised a multitude of security and privacy concerns, as users were not comfortable with the idea of such surveillance and intrusion into their privacy. Now, in a 46-page study, researchers have underscored the dangers of the plan and its negative impacts.

The What, How, and Why

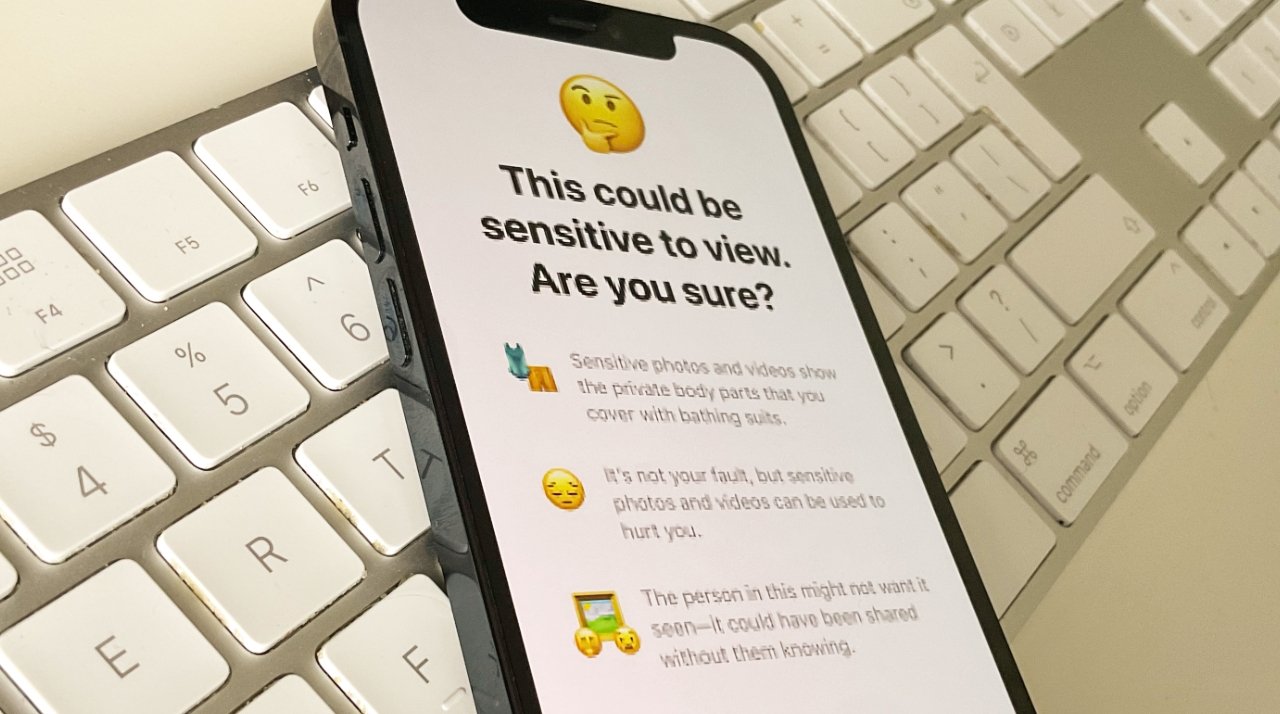

Apple first announced the feature in August this year. It would scan users’ iCloud photo libraries to locate child sexual abuse material (CSAM). The announcement also covered a communication safety feature that warns parents and children when sexually explicit photos are sent or received, and expanded CSAM guidance in Siri and Search.

However, a feature intended to ensure safety and security appears to do the opposite and cause more harm than good. The researchers criticize it for intruding on the privacy of citizens. In their view, such attempts should be halted and treated as a threat to national security, which underscores the gravity of the issue. The researchers are publishing their findings to inform the European Union about the dangers of a plan it wishes to pursue: the EU is seeking a similar program that would scan encrypted phones, not just for child sexual abuse material but also for signs of potential crime and imagery related to terrorist activity.

The research is a reminder that there is a line of privacy that shouldn’t be crossed in the name of surveillance and security. Apart from the security concerns raised by the feature, the study also showed that the technology was not very effective at detecting CSAM. Someone intent on evading it could get around the feature by making small edits to the images, which would render the technology largely futile. If loopholes are this easy to find, and a feature meant to ensure safety cannot account for them, then its efficiency and effectiveness should be questioned.

Beyond the authors of the study, criticism also came from privacy advocates, other security researchers, politicians, and even Apple’s own employees, who opposed the deployment of such a technology. As Apple’s efforts to reassure users proved futile, it bowed to the general demand and announced a delay in the release of the feature. The company intends to look into the matter closely and make the necessary improvements to ensure safety, although it has not yet said how it will do so.