Facebook Inc., the social media conglomerate, is too powerful a company to ignore even the smallest of vulnerabilities when it comes to the privacy and security of its users. The company has been working continuously to make sure that false information is not spread through any of its platforms, and this scrutiny has increased significantly since the start of the COVID-19 pandemic. Facebook, Twitter and Instagram have become places where fake news about the novel coronavirus and its vaccination drives circulates freely. Misinformation spreads so widely that many people end up believing whatever others are sharing. This is a serious problem for governments and for people’s own safety, though Facebook has been tackling the spread of misinformation on its platform fairly well.

Recently, the company took another step to curb this problem on its platform by rolling out a new test feature that prompts users when they are about to share an article without opening it. For instance, if I see a headline on Facebook such as “New vaccine from Oxford will completely cure COVID-19 positive patients within 2 days” and try to share the article without opening and reading it, Facebook’s new feature will prompt me to open and read the article before sharing it.

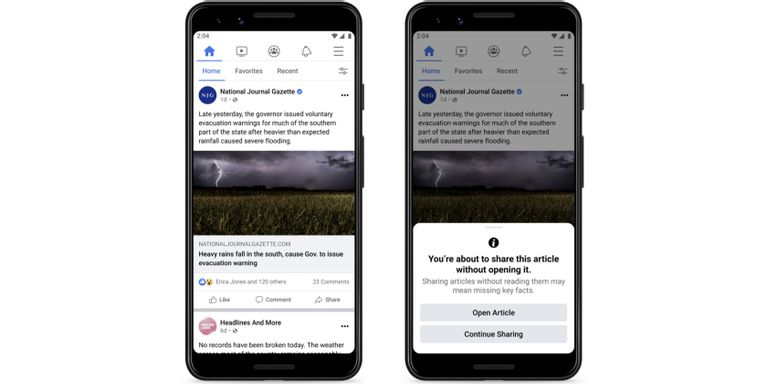

According to recent reports, the prompt will read, “You’re about to share this article without opening it,” followed by, “Sharing articles without reading them may mean missing key facts.” Facebook will then offer the user two options: open the article and read it, or simply continue sharing it as normal without reading it.

COVID-19 is just one trending example of how often people spread misinformation on the basis of a headline alone. However, this feature looks fairly similar to the one that Twitter introduced back in September for the exact same purpose. Beyond that, Twitter has also recently launched a new prompt that pops up when a user is about to respond to someone with hate speech or abusive comments. In an effort to reduce hate speech, harassment, abusive comments and bullying on the platform, Twitter prompts users every time its algorithm detects something fishy. Unsurprisingly, the feature is not 100% accurate and tends to prompt users even when they are using sarcasm to joke around with their friends or close ones. Twitter’s feature also takes into account the relationship between the two users who are interacting: a prompt is less likely if they have interacted before or are friends.

My guess is that a feature similar to this one will be next up from Facebook, as the company has a reputation for copying the best features from other platforms.