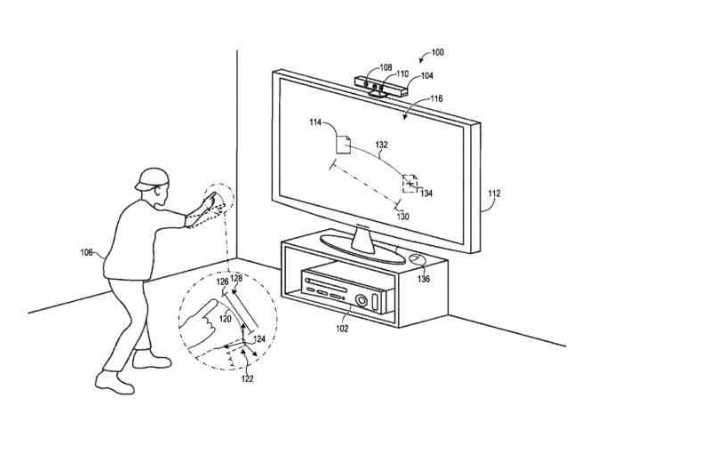

Technological advancements have improved the user experience, especially in gaming. With the arrival of Augmented Reality based 3D gaming, gaps appeared in the way gamers interact with their devices. To address these gaps and enhance the user experience, especially in 3D gaming, Microsoft is developing a three-dimensional user input technology that is set to change the game.

Why this technology?

The need for this technology arises from the fact that users want to experience any technology to the fullest. For example, a user playing a game on a screen has to give input through some kind of device, which makes the whole process a bit complicated. Microsoft is determined to give users a new way to provide input to their devices.

Users could simply give input with the movement of their hand, removing the need for peripherals. Microsoft’s technology could not only be used in its Xbox series but also be licensed to other gaming device manufacturers in the future.

How does this technology work?

Many methods exist for receiving three-dimensional user input. In one case, the position of a cursor in a user interface could be controlled on the basis of the position of the user’s hand.

The position of the user’s hand could be measured relative to a fixed coordinate system origin. The cursor position then mirrors the movements of the user’s hand as they occur. But with this method it is difficult to receive displacement, and not just position, as input, because of the persistent movement of the origin with respect to the user’s hand.

Other methods of receiving three-dimensional user input employ a fixed origin against which three-dimensional positions could be measured. However, fixed origins could create a sub-optimal user experience, as they could force users to return to a fixed world-space position to return to the origin.

This problem could be solved by keeping the three-dimensional input in a three-dimensional coordinate system and having an origin that could be reset based on user input.

For example, the system could provide a method of processing user input in a three-dimensional coordinate system that comprises receiving a user input of an origin reset and, responsive to receiving it, resetting the origin of the three-dimensional coordinate system.

After the reset, at least one three-dimensional displacement of the user input relative to the new origin could be measured, and a user interface element displayed in a user interface could be moved based on that displacement.
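The resettable-origin idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Microsoft's implementation: the class name `OriginResetTracker`, its methods, and the one-to-one mapping from hand displacement to element movement are all hypothetical.

```python
# Hypothetical sketch of a resettable origin for 3D input.
# All names here are illustrative assumptions, not part of any real API.

Vec3 = tuple[float, float, float]


class OriginResetTracker:
    """Moves a UI element by the hand's displacement from a resettable origin."""

    def __init__(self, element_position: Vec3 = (0.0, 0.0, 0.0)) -> None:
        self.origin: Vec3 = (0.0, 0.0, 0.0)       # current coordinate origin
        self.anchor: Vec3 = element_position      # element position at last reset
        self.element_position: Vec3 = element_position

    def reset_origin(self, hand_position: Vec3) -> None:
        """Called when the origin-reset input (e.g. a gesture) is received."""
        self.origin = hand_position
        self.anchor = self.element_position

    def update(self, hand_position: Vec3) -> Vec3:
        """Measure displacement from the origin and move the element by it."""
        displacement = tuple(h - o for h, o in zip(hand_position, self.origin))
        self.element_position = tuple(
            a + d for a, d in zip(self.anchor, displacement)
        )
        return self.element_position


tracker = OriginResetTracker()
tracker.reset_origin((1.0, 1.0, 1.0))        # user resets wherever the hand is
first = tracker.update((1.5, 1.0, 1.0))      # hand moves 0.5 along x
tracker.reset_origin((5.0, 5.0, 5.0))        # reset again at a new hand position
second = tracker.update((5.0, 6.0, 5.0))     # hand moves 1.0 along y
```

Because the origin is reset to wherever the hand happens to be, the user never has to return to a fixed world-space position, and the element continues from where it was left.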

This method offers flexibility, as any suitable hand gesture could be used as the user input of the origin reset, including pinching, fist clenching, hand or finger spreading, and hand rotation. Gestures performed with other body parts, such as the head, could also be used.

A gesture may be selected for origin resets on the basis that it is consistently identifiable and does not typically occur in the course of ordinary human movement.
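As a rough illustration of what "consistently identifiable" could mean in practice, the sketch below detects a pinch from the distance between the thumb tip and index fingertip. Everything in it is an assumption made for the example: the `PinchDetector` name, the fingertip inputs, and the two distance thresholds (in metres) are hypothetical and not taken from Microsoft's design. Two thresholds are used so that small jitter around a single cut-off does not fire repeated resets.

```python
import math


class PinchDetector:
    """Hypothetical pinch detector using two thresholds (hysteresis)."""

    def __init__(self, close_at: float = 0.02, open_at: float = 0.04) -> None:
        self.close_at = close_at   # assumed distance below which a pinch starts
        self.open_at = open_at     # assumed distance above which a pinch ends
        self.pinching = False

    def update(self, thumb_tip, index_tip) -> bool:
        """Return True only on the frame where a new pinch begins."""
        distance = math.dist(thumb_tip, index_tip)
        if not self.pinching and distance < self.close_at:
            self.pinching = True
            return True
        if self.pinching and distance > self.open_at:
            self.pinching = False
        return False


detector = PinchDetector()
thumb = (0.0, 0.0, 0.0)
# Index fingertip distances over six frames: open, pinch, jitter, jitter, open, pinch.
frames = [0.10, 0.01, 0.03, 0.01, 0.05, 0.01]
events = [detector.update(thumb, (d, 0.0, 0.0)) for d in frames]
```

Each `True` in `events` marks a frame where an origin reset would be triggered; the jitter frames in the middle do not trigger a second reset because the fingers never opened past the release threshold.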

What’s next?

The next challenge for Microsoft will be to implement this technology in real-life scenarios. The company would have to decide on the number of gestures that the device could support, or it could let users choose the gestures they would like to keep. Only time will tell what Microsoft's plans are for this technology.

Feature Image: emaze.com