Companies have been trying to jump into the virtual reality market to improve the user experience, and Adobe recently patented a unique virtual reality headset that comes with a touch screen on the front of the headset.

Why virtual reality with a front touch screen?

Plenty of similar headsets are already available on the market. But traditional virtual reality headsets have one major drawback: providing input to the device.

Some virtual reality devices allow users to provide input by using one or more cameras to capture the user’s hand gestures, for example pointing gestures, pinching gestures, or even hand movements. Hand gestures require cameras and large amounts of computation, which increases the cost of the virtual reality device and also complicates the process.

Additionally, hand recognition is often unreliable: hardware designed to recognise hand motions frequently fails to recognise quick movements or interprets hand movements incorrectly.

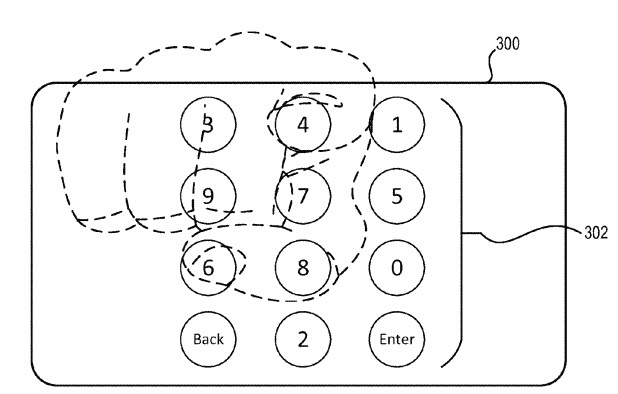

Some conventional virtual reality devices allow users to provide input using touch gestures on small touch interfaces. Although these devices let users interact with a touchpad or touch sensor, they often support only a small number of hand or touch gestures, so the types of input that a user can provide are also limited.

How does this technology work?

The virtual reality device includes a display screen that is secured to a housing frame and has a pair of lenses on a first side of the display screen. The device also includes a touch interface on an outer surface, positioned on a second side of the display screen that is exactly opposite the first side.

The device detects the user interaction at the touch interface and generates a response on the display screen that corresponds to the position of the user interaction at the touch interface. The area of the touch interface is mapped to an area of the display screen based on predetermined eye positions of the user.

Because the position of the user interaction on the touch interface corresponds to a location on the display screen, the user can easily select elements without guessing where the interaction will land on the display screen. The visual indication shown in response also allows the user to easily locate the position of the interaction relative to the display screen.
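The mapping from touch position to display position can be illustrated with a minimal sketch. This is not Adobe's implementation: the `Rect` type, the `map_touch_to_display` function, the `mirror_x` flag, and all dimensions are hypothetical, and the sketch assumes a simple linear mapping between two rectangles. How the predetermined eye positions determine the mapped display area is not modelled here; the display rectangle is simply taken as given.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Axis-aligned rectangle: origin (x, y) plus width and height."""
    x: float
    y: float
    width: float
    height: float


def map_touch_to_display(touch_x, touch_y, touch_area, display_area, mirror_x=False):
    """Linearly map a point on the touch surface to a point on the display.

    Assumption: both areas are rectangles and the mapping is linear.
    mirror_x is a hypothetical option for a touch sensor whose x axis is
    reported as seen from outside the headset rather than by the wearer.
    """
    # Normalise the touch position to the range [0, 1] on each axis.
    u = (touch_x - touch_area.x) / touch_area.width
    v = (touch_y - touch_area.y) / touch_area.height

    # Clamp so touches just outside the mapped area land on the nearest edge.
    u = min(max(u, 0.0), 1.0)
    v = min(max(v, 0.0), 1.0)

    if mirror_x:
        u = 1.0 - u

    # Scale the normalised position into display coordinates.
    return (
        display_area.x + u * display_area.width,
        display_area.y + v * display_area.height,
    )


# Illustrative dimensions only: a 100 x 50 touch surface and a 1920 x 1080 display area.
touch_area = Rect(0, 0, 100, 50)
display_area = Rect(0, 0, 1920, 1080)

cursor = map_touch_to_display(25, 25, touch_area, display_area)
print(cursor)  # (480.0, 540.0)
```

A visual indication, such as a cursor, could then be drawn at the returned display coordinates so the user sees where the touch landed.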

What’s next?

The next step for Adobe will be to implement this technology in a real product. The company will have to ensure that the device can detect touch with pinpoint accuracy, and it will also have to consider the cost. For now, we have to wait and see how Adobe implements this technology.

Feature Image: premarnd.com