Have you ever wondered what happens to a selfie that you post on social media? Data privacy activists and researchers have long warned that images uploaded to the Internet might be used to train artificial intelligence (AI)-driven facial recognition software. These AI-enabled tools (such as Clearview AI, AWS Rekognition, Microsoft Azure, and Face++) may be used by governments or other organizations to monitor people and draw conclusions about their religious or political preferences.

Tools That Can Help Spoof Facial Recognition AI

Using adversarial attacks (deliberately altering input data so that a deep-learning model makes mistakes), researchers have devised ways to fool, or spoof, these AI tools, preventing them from recognizing the person in a selfie or even from detecting a face at all.

Two of these approaches were presented at the International Conference on Learning Representations (ICLR), a leading AI conference held virtually last week. As MIT Technology Review reports, most of the latest tools for fooling facial recognition software make small changes to an image that are invisible to the naked eye but can cause an AI to misidentify the person or object in it, or even stop it from recognizing the image as a selfie at all.
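To make the idea of an imperceptible perturbation concrete, here is a minimal sketch of the textbook Fast Gradient Sign Method (FGSM) applied to a toy linear "face matcher". It is not the method used by Fawkes, LowKey, or any commercial system; those tools run more elaborate optimizations against deep neural networks. The scoring model, the weights, the pixel values, and the `epsilon` budget below are all hypothetical, chosen only to show how tiny per-pixel changes can add up across many pixels.

```python
"""Toy FGSM sketch: nudge every pixel by at most epsilon so that a
hypothetical linear "is this person A?" scorer changes its answer."""


def score(pixels, weights, bias=0.0):
    """Linear match score; positive means "person A" (hypothetical model)."""
    return sum(p * w for p, w in zip(pixels, weights)) + bias


def sign(x):
    """Return -1, 0, or 1 depending on the sign of x."""
    return (x > 0) - (x < 0)


def fgsm_cloak(pixels, weights, epsilon):
    """Apply one FGSM step that lowers the score.

    For a linear scorer, the gradient of the score with respect to each pixel
    is simply that pixel's weight, so stepping each pixel by -epsilon * sign(w)
    lowers the score as much as possible under a per-pixel budget of epsilon.
    Results are clipped to the valid intensity range [0, 1].
    """
    return [min(1.0, max(0.0, p - epsilon * sign(w)))
            for p, w in zip(pixels, weights)]


# Hypothetical 1,000-pixel "image" and model weights (intensities in [0, 1]).
N = 1000
weights = [0.01 if i % 2 == 0 else -0.01 for i in range(N)]
pixels = [0.52 if i % 2 == 0 else 0.48 for i in range(N)]

epsilon = 0.03  # change any single pixel's intensity by at most 3%
cloaked = fgsm_cloak(pixels, weights, epsilon)

before = score(pixels, weights)
after = score(cloaked, weights)
largest_change = max(abs(a - b) for a, b in zip(pixels, cloaked))

print(f"score before: {before:.3f}")  # positive: matched as person A
print(f"score after:  {after:.3f}")   # negative: no longer matched
print(f"largest per-pixel change: {largest_change:.3f}")
```

In this toy setup no pixel moves by more than 0.03, yet because all 1,000 small shifts push the score in the same direction, the total drops by 0.3 and the match flips from positive to negative. That accumulation across many input dimensions is the basic intuition behind why changes people cannot see can still mislead a model.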

One of these “image cloaking” tools, named Fawkes, was created by Emily Wenger and her colleagues at the University of Chicago. Valeriia Cherepanova and her colleagues at the University of Maryland created the other, named LowKey.

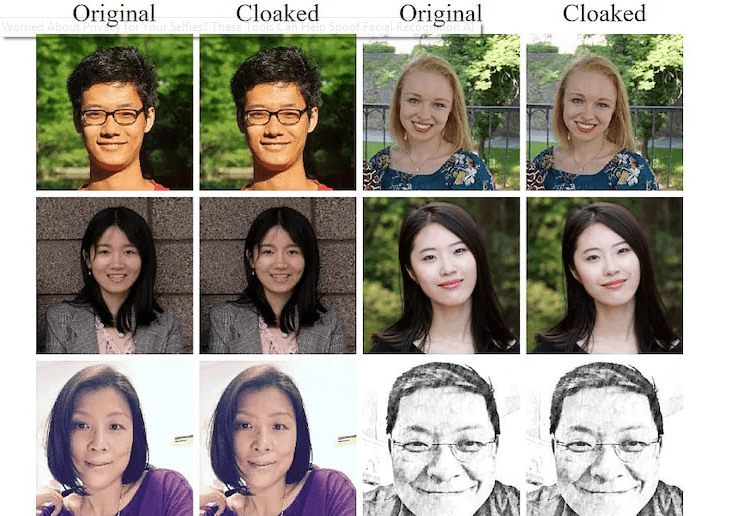

Fawkes alters images at the pixel level so that facial recognition systems cannot recognize the people in them, while leaving the images looking unchanged to humans. In a limited data set of 50 images, Fawkes was 100 percent successful against commercial facial recognition systems. Fawkes is available for Windows and Mac, and its approach is described in the paper ‘Protecting Personal Privacy Against Unauthorized Deep Learning Models.’

Fawkes, however, cannot fool existing systems that have already been trained on your unprotected images, according to its authors. LowKey builds on Wenger’s framework by adjusting images further, to the point that they can fool commercial AI models and prevent them from recognizing the person in the picture. LowKey, which is described in a paper titled ‘Leveraging Adversarial Attacks to Protect Social Media Users From Facial Recognition,’ is freely available online.

Another approach, described in a paper titled ‘Unlearnable Examples: Making Personal Data Unexploitable’ by Daniel Ma and colleagues at Deakin University in Australia, goes a step further: it alters images so that an AI model effectively ignores them during training, leaving the trained model unable to identify what those images show any better than a random guess.

Wenger points out that Fawkes was temporarily unable to fool Microsoft Azure. “It suddenly became robust to cloaked images that we had created… We have no idea what happened,” she said. It is now a race against the AI, she added; Fawkes was later modified so that it could spoof Azure once more. “This is another cat-and-mouse arms race,” she said.

Wenger argues that while regulation of AI tools can help protect privacy, there will still be a “disconnect” between what is legally appropriate and what people want, and that spoofing methods like Fawkes will help “fill the void.” Her reason for creating the tool, she says, was simple: she wanted to give people “some control” that they didn’t have before.
